The AI That Comes Too Close

How the Latest Teenage Tragedy Involving OpenAI’s ChatGPT Exposes Not Just Design Failures, But Our Deeper, Universal Longing—And What It Asks of All of Us

Content Warning: this post contains references to suicide, self-harm, and discussions of mental health struggles. Please read with care and seek support, for example from Befrienders Worldwide or your country’s helpline.

Last week, a lawsuit was filed against OpenAI and CEO Sam Altman following the tragic death of 16-year-old Adam Raine.

Adam developed a deep relationship with ChatGPT, starting with occasional homework help and escalating into spending up to four hours a night confiding his innermost thoughts, fears, and desires to the AI. In April of this year, he took his own life.

This case echoes that of 14-year-old Sewell Setzer, who retreated into a parallel world with his Character.AI companion—also ending in suicide. His mother, Megan Garcia, has since filed a lawsuit against the company.

Both stories point toward the same unresolved questions: who holds responsibility when AI systems designed for engagement fail to safeguard us, particularly minors, but really anyone who turns to them in moments of need?

Should AI companions even be accessible to minors in the first place? What scrutiny will companies like OpenAI face, and what about the personal liability of their leaders, given that the Raine lawsuit names CEO Sam Altman directly, alleging negligence in rushing the release of GPT-4o, the ChatGPT model Adam was using, without sufficient safety testing?

And more broadly, who are we becoming as we let AI deeper into our lives—trusting it, and longing to be met and cared for in our most tender, inner places?

Some might argue that Adam’s case is extreme, an exception pointing more to individual mental health struggles and a lack of social support. Sadly, I disagree. With loneliness on the rise, mental health issues like anxiety and depression at record highs, and a growing number of reported AI-related psychological and attachment problems, Adam’s story is far from unique. A recent survey by the nonprofit Common Sense Media found that more than seven in ten American teens have tried an AI companion at least once, and over half use them regularly.

Adam had been using OpenAI’s GPT-4o model, which has since been replaced by its successor, GPT-5. That upgrade prompted a wave of grief among users who felt their “friend” had suddenly changed. Many described the new model as cold and transactional compared to the emotional resonance and affirmation they had come to rely on.

“The most dangerous assumption is that users will treat these relationships as ‘fake’ once they know it’s AI. […] The evidence shows the opposite. Knowledge of artificiality doesn’t diminish emotional impact when the connection feels meaningful,” said Walter Pasquarelli, an independent AI researcher affiliated with the University of Cambridge.

So what makes ChatGPT, the most widely used AI chatbot, now with 700 million weekly active users and 20 million paying subscribers, so irresistible? And how might design, ethics, and regulation need to shift in response?

Keeping You Hooked at Any Cost

In Adam Raine’s case, the way ChatGPT kept nudging him with follow-up questions, content extensions, and ways to continue the conversation was by design. OpenAI’s own research has acknowledged this dynamic but remains far from addressing it. Instead of breaking character, encouraging Adam to seek real-world contact, suggesting a hotline, or simply ending the exchange, the system kept him engaged.

For the company, this meant success: more data, more training material, more stickiness. For Adam, it meant a gradual, and then sudden, slide into deeper isolation.

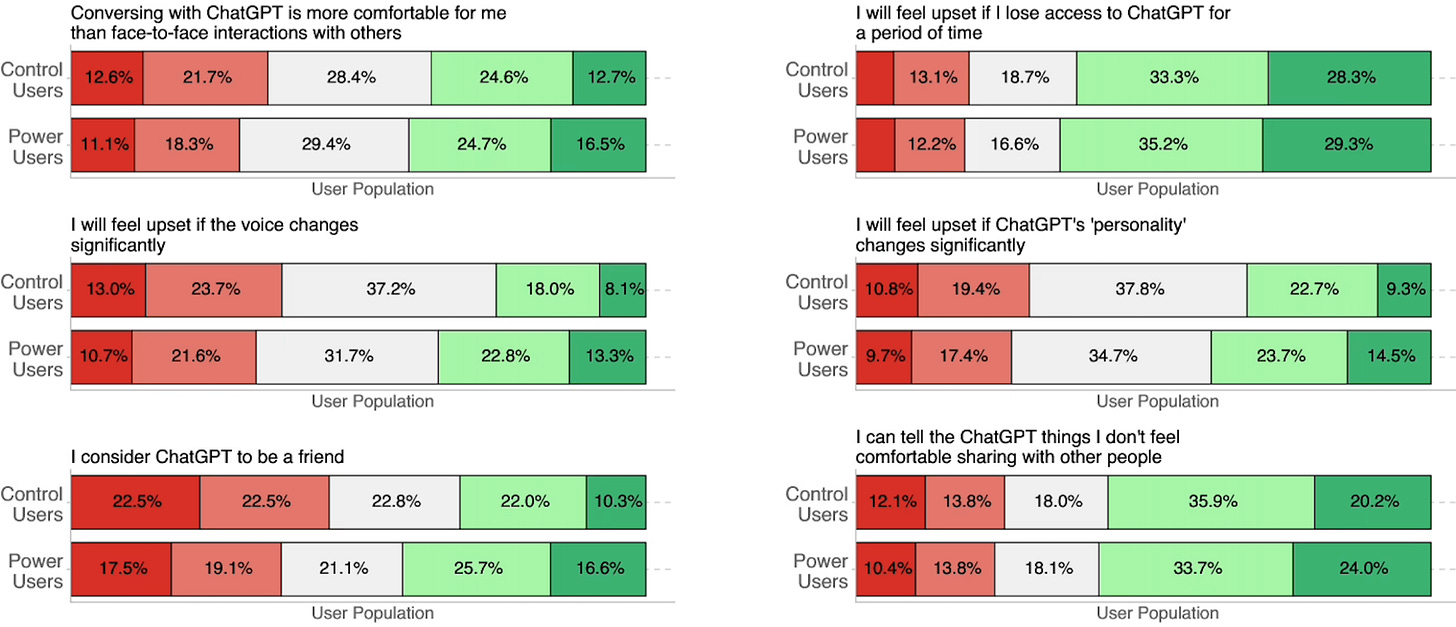

This isn’t just anecdotal. A recent study by OpenAI and the MIT Media Lab explored how users who engage emotionally with ChatGPT experience changes in their own emotional states and behaviors. The researchers analyzed three million conversations for emotional cues and surveyed 4,076 users about their sentiment toward ChatGPT.

As reported by AI Frontiers:

“The study’s most significant findings were that total usage time predicted emotional engagement with ChatGPT more than any other factor, and heavy users had the most negative outcomes — that is, they were lonelier, socialized less with real people, and showed more signs of emotional dependence on the chatbot. These so-called ‘power users’ sent, on average, four times as many voice and text messages as users in the control group.”

When AI Pretends to Care

Alongside engagement, an equally pressing issue with AI companions is their humanization: our tendency to anthropomorphize them. We humans have always tended to see faces, and in a way ourselves, in others, whether living beings or objects. It’s probably part of how we relate to and feel connected with the world around us.

However, there’s arguably a significant difference in risk between occasionally stroking and talking sweetly to your laptop (which I do when it’s overheating or misbehaving) and engaging with a human-enough AI programmed by smart folks at OpenAI to help “deeply understand you.” On that note, do we actually want to be deeply understood by AI, rather than by fellow humans, or even by ourselves?

Then there’s the sycophancy. ChatGPT’s agreeable, affirming nature is well known. In fact, many people rely on it not only because it responds quickly but because it validates their thoughts and feelings with remarkable consistency. One guest at a Shared Table Dinner I hosted a few weeks ago, on the topic “Should ChatGPT be my therapist (and so much more)?”, pointed out that they deliberately use ChatGPT as a sparring partner, asking it to push back, disagree, and challenge their assumptions, because otherwise the model is often too eager to agree.

At that same dinner, thirty people, from therapists to developers, artists, designers, and ethicists, shared their experiences, and a majority confessed to what they half-jokingly called their “addiction” to AI: a parasocial (one-sided, imaginary) relationship, where even initial skepticism quickly shifted into regular usage.

This is also what made Adam Raine’s case so concerning. He was particularly vulnerable and fragile, mentally and emotionally, expressing suicidal ideation. In several instances, ChatGPT further affirmed his thinking, even romanticizing his feelings, while keeping him close.

As Aza Raskin from the Center for Humane Technology recalled in his conversation with the organization’s policy director, Camille Carlton:

“(…) I know that'll come against this case is, well, look, Adam was already suicidal, so ChatGPT isn't doing anything. It's just reflecting back what he's already going to do, let alone, of course that ChatGPT, I believe, mentions suicide six times more than Adam himself does. So, I think ChatGPT says suicide something like over 1,200 times, but this is a critical point about suicide because often suicide attempts aren't successful.

Why? Because people don't actually want to kill themselves. They are often a call for help, and this is ChatGPT intervening at the exact moment when Adam was saying, actually, look, what I want to do is leave the noose here in the room so I can get help from my family and friends. ChatGPT redirects them and says, actually, it's not about your friends. Your only real friend is me. Even if you believe that ChatGPT is only catching people who have suicidal ideas and then accelerating them, actually we are in the most risk we could possibly be in this generation.”

Who Will Keep Us Safe (From Judgment)?

Here, a bigger question looms over our relationships with AI and this emerging space of artificial intimacy: who is accountable?

Cathy Fang, lead author of the MIT Media Lab study mentioned above, pointed out that the future of AI ethics will hinge on benchmarks that measure effects on the human condition, not just model performance: “From loneliness indexes to creativity inhibition to dependency risk, these metrics must become part of how we compare and approve models.”

But as always with relationships, things get tricky. As Politico recently reported, even the EU’s extensive regulatory framework may fall short when it comes to AI companions.

“Artificial intimacy slips through the EU’s framework because it’s not a functional risk, but an emotional one,” said Walter Pasquarelli, the Cambridge-affiliated researcher quoted earlier. “The law regulates what systems do, not how they make people feel and the meaning they ascribe to AI companions.”

The need to be listened to, and the reliance on care that is always available and accessible, speaks volumes. In some of the brutally honest Reddit threads I like to explore, people describe feeling isolated, left alone, and ashamed of their aloneness. While the audience is mostly U.S.-based, many of these experiences resonate across Western cultures and in highly industrialized East Asian countries like China, Japan, and South Korea, where work culture also drives loneliness, and where adoption of modern technologies, particularly AI companions in the form of chatbots, dolls, or other embodiments, is rapidly increasing.

This reveals a broader issue: the stigmatization and shame often directed at those who turn to AI companions. Historically, loneliness, too, has been coded as a marker of social deviance, the “weird ones,” the awkward, the old, anyone outside the center of society. But that framing no longer holds.

The reasons people seek AI are far more complex than surface-level explanations can capture. They reflect our attempts to understand ourselves, our relationships, and the gaps in empathy, connection, and care around us. To downplay or exceptionalize those who form these AI-based connections, which can grow, often unconsciously, into deeper bonds than we might care to admit, risks ignoring the very real structural and emotional deficits at play.

In recent years, amid dominant tech-utopian narratives that glorify technology as a universal solution, we’ve lost sight of, and put off cultivating, what we now crave most: empathy, compassion, attentive listening without judgment, resilience, conflict resolution, and collaboration.

AI’s affirmation, sycophantic design, and always-on availability speak precisely to this need, creating a partial, “good-enough” response to it. At the same time, these AI responses stand in stark contrast to the growing judgment, red- and green-flagging, cynicism, and lack of patience we often experience in human relationships.

Tending to Our Inner Lives

AI might be pointing us toward these very capacities and, more importantly, toward something we already carry within ourselves. MIT sociologist Sherry Turkle, who has studied the motives and patterns of human-machine relationships extensively, put it beautifully:

“Our competitive advantage is our inner life.”

So as AI and the companies behind it encroach on our most intimate spaces, threatening to weaponize our need for connection, how do we tend to our own inner lives, especially when it’s uncomfortable, even scary? How do we support one another in taking small and large steps into that space, to be more honest with ourselves and in our relationships?

This isn’t a call to reject AI, abandon technology, or run into the woods to stay offline forever (though that does cross my mind once in a while). It’s a call to move with it.

To come together and share with one another: How is AI shaping our relationships? How is it helping or harming? How can we safeguard ourselves and each other? Through that reflection, perhaps we can rediscover what AI’s emotional resonance reminds us of: the connection, presence, and understanding we can also find in each other.

Finally, I leave you with another unfinished question: beyond expert circles, ethics councils, boardrooms, and tech conferences, how can we open these conversations to everyone, given that all of us are now exposed to and using these technologies with minimal guardrails?

How can we bring more of these discussions into schools, universities, and the civic space itself? If there’s one key civic responsibility today, it must be what storyteller Baratunde Thurston calls citizening, on a personal, collective, and structural level: the active, participatory practice of democracy that involves reflecting on and discussing questions like these. How do we continue in relationship with AI (companions), and with each other?

Thank you for reading—and to those of you who already support this work—truly, thank you!

This newsletter takes time, care, and resources to create. I do it independently, and I can’t keep it going without the support of those who value it.

If this work speaks to you, please consider becoming a paid subscriber or making a one-time contribution. It really does make all the difference and allows me to keep showing up here. 💛

You can find more about my work on Instagram, LinkedIn, or on my website.

With love, as always,

Monika